Are We Testing or Monitoring?

Testing or Monitoring — Which One’s Guiding You?

My early career was in an era of “testing days.” Back in the late '80s and early '90s, testing in field sports meant stopping everything: stop the gym, stop the running, stop the ball work. We're testing today.

It was a ritual. A moment where we all held our breath and hoped the results reflected our effort and planning. But here's the problem: life — and sport — doesn't happen in isolated snapshots. And neither does performance.

In contrast, during that same period, I was also cutting my teeth in the swimming world, where things were different. Swimming was already using a more integrated form of monitoring: lactates, heart rate, stroke frequency, lap times — data collected regularly and embedded in training.

That distinction stuck with me. And it still drives my philosophies today.

Testing vs. Monitoring: What’s the Difference?

The difference between testing and monitoring really comes down to frequency and intent.

Testing is intermittent. You stop, measure, and analyse. A single point in time.

Monitoring is ongoing. You're capturing clues every day, tracking changes over time.

Both have value within a system, but each needs a clearly defined role.

Testing provides lag indicators. These are historical reflections. They tell you what happened. Think of them like rearview mirrors. Once you have the data, it may be six months until your next test, so you cannot evaluate how athletes are adapting to the program in the meantime.

Monitoring gives you lead indicators. These point forward. They offer the potential to model or forecast performance, allowing the coach to make calculated changes to the program “on the run” and guide decisions before it’s too late.
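One simple way to turn a daily monitoring stream into an early warning is to compare each new reading with the athlete's own recent baseline. The sketch below is only an illustration: the function name `flag_deviations`, the 7-day window, the z-score limit of 2.0, and the heart-rate numbers are all assumptions made for the example, not values taken from any particular program.

```python
from statistics import mean, stdev


def flag_deviations(values, window=7, z_limit=2.0):
    """Return (day_index, z_score) pairs for readings far from the trailing baseline.

    Each reading is compared with the mean and standard deviation of the
    previous `window` readings. Window and limit are assumed defaults.
    """
    flags = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        spread = stdev(baseline)
        if spread == 0:
            continue  # flat baseline, a z-score is undefined
        z = (values[i] - mean(baseline)) / spread
        if abs(z) >= z_limit:
            flags.append((i, round(z, 2)))
    return flags


# Hypothetical morning heart rates (beats per minute) for one athlete.
resting_hr = [52, 51, 53, 52, 50, 52, 51, 52, 53, 58, 59, 54]

for day, z in flag_deviations(resting_hr):
    print(f"Day {day}: z = {z}")
```

A flag here is a clue to follow up with the athlete, not a verdict. The window and limit would need tuning to the variable and the sport.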

The Limits of Testing

A single test at an isolated point in time is fraught with risk. Take a 2K time trial in the AFL. I have heard this more times than I care to remember:

“I didn’t feel great that day. I could’ve gone harder.”

Well, then what have we really learned?

When athletes can manipulate effort — consciously or not — the validity of the result is compromised. If we’re honest, we know this happens. And if we know it happens, we can’t trust the result as the sole marker of performance.

That’s why I’ve long leaned toward the idea that training is testing, and testing is training. The best data is collected when athletes don’t know they’re being measured. It's authentic. It's live. And it reflects real effort.

But that’s only true if the data is good. Poor monitoring data puts us right back where we started — questioning the outcome, uncertain what to believe.

So what's the answer?

Toward a Balanced System

A mature system balances both approaches.

Testing windows give us structure and the chance to validate.

Monitoring streams give us context and continuity.

But neither works without the right mindset. And the right mindset starts with this:

We're not collecting data. We're collecting clues.

Performance is a mystery. Our job isn’t to “know,” because we can’t know all the features of the human condition that lead to performance. Our job is to improve the odds that we’re heading in the right direction. That means solving problems like scientists and reasoning through them like clinicians.

Here’s how I approach it.

A 4-Point System for Better Decision-Making

1. Define the Question

What exactly are you trying to answer? Write it down. If you're trying to evaluate game performance, say so. If you're modelling performance data, that's different. Be specific. Ambiguity here leads to poor communication across your team — from sports science to medical to coaching. Without clarity on the question, no amount of data will help.

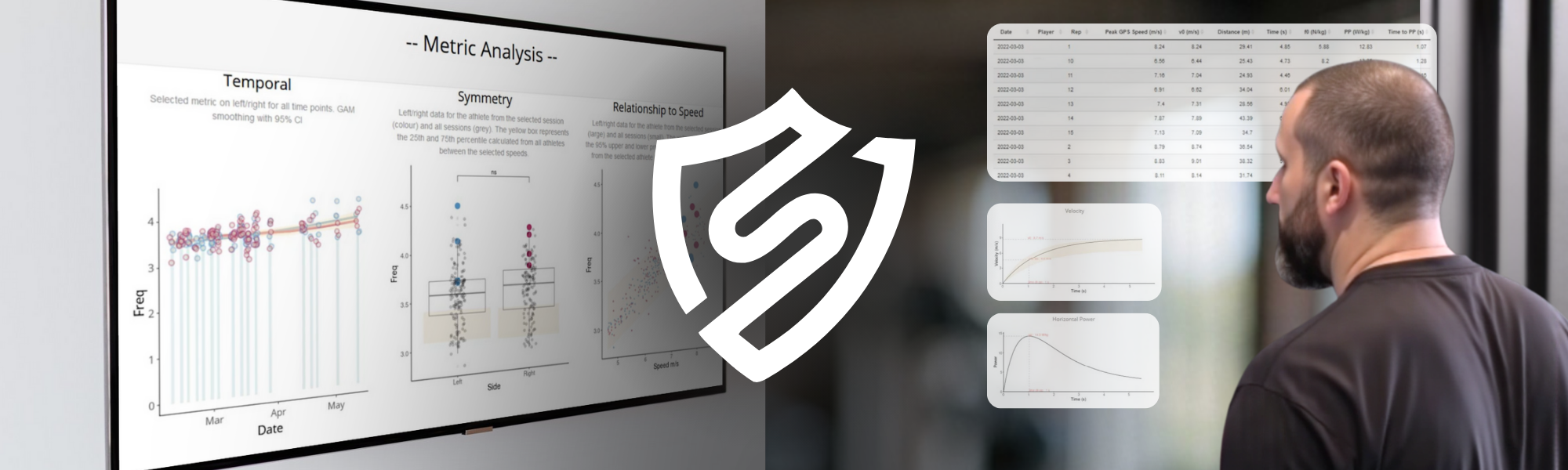

2. Define Each Data Stream

What does each variable measure, and how does it relate to the question? Just because data is available doesn’t mean it’s useful. GPS, for example, is powerful — but it’s not a magic box. You have to understand what each variable tells you and why it matters in your specific context.

3. Eliminate Collinearity

If two variables measure the same thing, drop one. Here’s the rule:

If the correlation between two variables is above 0.8 (in either direction, so below -0.8 counts too), keep one and dump one.

Collinearity distorts the picture. It makes one theme look more important than it is simply because you've captured it twice. It’s one of the most common errors I see, and one of the easiest to fix.

Keep the variable that best explains your dependent variable, the thing you’re trying to predict or improve. Use basic correlation statistics. If you can’t run simple correlation or regression, make sure someone in your department can.
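The rule above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a prescribed tool: the function names `pearson` and `prune_collinear`, the variable names, and all of the numbers are made up for the example. Only the 0.8 cutoff and the "keep the one that best explains the dependent variable" logic come from the rule itself.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def prune_collinear(streams, target, threshold=0.8):
    """Drop one variable from each pair whose |r| exceeds the threshold.

    Within a collinear pair, the variable more weakly correlated with the
    target (the dependent variable) is the one removed.
    """
    kept = dict(streams)
    for a, b in combinations(list(streams), 2):
        if a not in kept or b not in kept:
            continue  # one of the pair has already been dropped
        if abs(pearson(kept[a], kept[b])) > threshold:
            r_a = abs(pearson(kept[a], target))
            r_b = abs(pearson(kept[b], target))
            del kept[a if r_a < r_b else b]
    return kept


# Hypothetical weekly squad values (made-up numbers).
streams = {
    "total_distance_km": [42.0, 45.5, 39.0, 48.0, 44.0, 41.0],
    "player_load_au": [410, 450, 385, 470, 445, 400],
    "sprint_count": [31, 28, 35, 30, 24, 33],
}
target = [6.8, 7.4, 6.1, 7.9, 7.2, 6.5]  # hypothetical performance rating

kept = prune_collinear(streams, target)
print("Keep:", sorted(kept))
```

With only a handful of observations, as in this toy data, correlations are unstable, so in practice the check should be run on a full block of training or a season of data.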

4. Don’t Throw the Baby Out With the Bathwater

New doesn’t always mean better. I remember the hype around new “duration-specific” running intensity metrics. The buzz was that this was the next big thing. But when I compared them to what we were already doing (breaking down average speeds by rotation and drill), the overlap was very high (R² = 0.87). Most of what the new metrics captured, we were already measuring.

Changing the system for the sake of novelty is a waste of time — and undermines confidence from your coaching staff. Always cross-check new metrics against your current process. If the new variable doesn’t add meaningful signal, don’t adopt it.

Build your system slowly. Build your philosophy over time.

Every sport is different. Field hockey, AFL, NFL — they each demand their own strategy. Your sports science system needs a guiding philosophy. Otherwise, you’re just reacting to data without purpose.

So here’s the question I’ll leave you with:

Are you collecting numbers, or are you solving problems?

Because in the end, it’s not about the test or the tool. It’s about knowing what you’re trying to uncover — and whether your system is built to help you find it.